May 5, 2026

AI in Legal Practice

Amy Swaner

On March 30, 2026, a federal magistrate judge in Colorado issued an order in an employment discrimination case most lawyers will never read. They should — but not for the reasons most of the commentary has been suggesting. Morgan v. V2X, Inc., No. 25-cv-01991-SKC-MDB (D. Colo. Mar. 30, 2026), is one of the most thoughtful federal AI decisions issued so far this year. It is also narrower than it is being read to be.

In more than one venue, commentators have turned Magistrate Judge Maritza Dominguez Braswell’s order into a universal AI governance doctrine. It isn’t. What she decided was a discovery dispute about protective-order language in a case where a pro se plaintiff wanted to use public AI tools on documents the corporate defendant had produced under a confidentiality order. Judge Braswell’s reasoning and framework are intelligent and elegant. The reasoning deserves careful attention. But let’s be clear on what Braswell actually held and what it actually means for your use of AI.

Morgan as the Case Study

Background

The underlying case is an employment discrimination lawsuit in the District of Colorado. Archie Morgan is a pro se plaintiff suing his employer V2X. Both sides used AI in their litigation work. V2X has enterprise AI tools. Morgan, representing himself, uses public ones. The AI dispute surfaced when V2X moved to restrict Morgan’s use of public AI platforms — a restriction Morgan argued would create an unfair “technological gap” between a self-represented litigant and a well-funded corporate defendant with proprietary AI and cloud-based systems of its own. V2X also moved to compel Morgan to disclose which tools he was using.

Judge Braswell — who co-chairs her district’s AI Committee, co-founded the Judicial AI Consortium, is on Sedona Conference’s working group (WG13) regarding AI, and is genuinely knowledgeable in this material — did not default to either side’s proposed language. She wrote her own. Before uploading confidential information covered by the protective order to any AI platform, she held, the provider must be contractually prohibited from:

Storing or using inputs to train or improve the model;

Disclosing inputs to third parties (except where essential to service delivery, and then only on terms no less protective than the protective order itself); and

Retaining inputs beyond what is necessary.

She also required that the vendor contractually afford the party the ability to delete all confidential information upon request, and that the party retain written documentation of the contractual protections. And she ordered Morgan to disclose the name of the AI tool he was using, finding that tool selection alone does not reveal mental impressions or legal strategy absent a specific factual record.

That is the entire order. A set of contract requirements and a disclosure obligation, tied to information produced under a protective order. On its face, thoughtful but narrow

What the Judge Decided

Commentary on Morgan has slotted it alongside United States v. Heppner, No. 25-cr-00503 (S.D.N.Y. Feb. 17, 2026), and Warner v. Gilbarco, Inc., No. 2:24-cv-12333 (E.D. Mich. Feb. 10, 2026). That framing puts the three cases into a single bucket labeled “AI and privilege” and treats Braswell as though she were answering the same question as Judge Rakoff and Judge Patti. She wasn’t.

An Everlaw article discussing Morgan noted the procedural posture as material under a protective order being submitted into an AI tool, but then said “the court . . . established a precise set of contractual must-haves for any legal professional looking to integrate AI into their workflow.” Clio went further, headlining its post “Courts Are Starting to Pick AI Tool Winners” and characterizing the order as “a new standard for AI use in litigation”. Respectfully, both are overstatements about the holding and its reach. Heppner and Gilbarco both asked whether a litigant's use of AI waived existing legal protections — traditional doctrinal questions applied to a new technology. The question Braswell answered in Morgan was different and more narrow: in a discovery dispute, what should a protective order say about a pro se plaintiff's use of public AI tools on materials the corporate defendant produced under a confidentiality designation? That is a data governance question, not a privilege question.

As a data governance nerd (or maybe Diva?), this is the same type of question every data protection officer, a cloud governance lead, or a vendor risk analyst asks about any third-party processor. What happens to the data once it leaves my environment? Who can see it? How long does it persist? What rights does the vendor have to use it for their own purposes? Privacy professionals and information governance lawyers have been asking these questions for decades. However, they are more important now, thanks to widespread AI use.

The Applicability of the Order

Braswell decided two things, one of them quite narrow:

1. Work product privilege may apply to an advocate’s AI tool use;

2. A litigant bound by a protective order may not use AI tools on materials covered by that order unless the AI vendor meets her contract-based standard.

That’s it.

She did not hold that all lawyers everywhere must use only AI tools meeting that standard. She did not hold that a solo practitioner using ChatGPT or Claude on legal work is violating Rule 1.6. She did not hold that consumer AI is per se incompatible with confidentiality obligations. She resolved a discovery dispute about protective order language in a specific case with a specific fact pattern.

Granted, Judge Braswell’s reasoning is attractive. The standard is clean, sensible, and maps to real data governance principles. It should inform how lawyers think about AI tool selection generally. But “should inform” and “is binding authority” are different things.

What Judge Braswell no doubt understood was that different data should be treated differently.

Four Data Categories, Four Enforceability Tiers

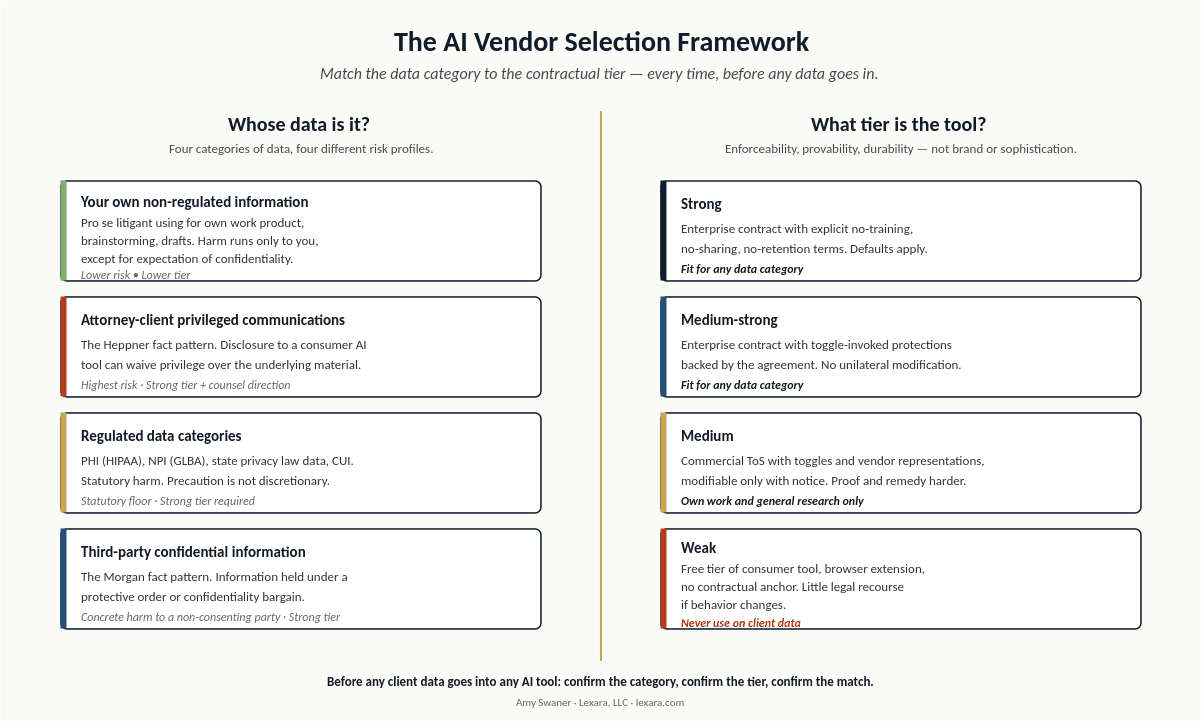

Data governance is everything with AI implementation. One of the first questions with AI Governance must be “Whose data is at risk, what is the risk, and what can I enforce?” Four categories of data behave differently, and the required precaution change accordingly.

Your own non-regulated information. A pro se litigant using ChatGPT to analyze her own employment history and draft her own complaint. The information is hers; the harm from any leakage runs to her and only her. She is entitled to make her own decision. Braswell’s rule would be overbroad in this category — and she didn’t purport to apply it here.

Attorney-client privileged communications. The Heppner fact pattern. Here the harm is potentially catastrophic and irreversible. Judge Rakoff’s reasoning raises the question — left open in Heppner itself — of whether feeding lawyer-provided information into a consumer AI tool risks waiving privilege over the original attorney-client communications. If that reasoning is extended in future cases, the three-part standard may actually be insufficient in this category.

Regulated data categories. PHI under HIPAA, nonpublic personal information under GLBA, personal data under state privacy laws. Rule-governed territory. The harm is statutory. The precaution is mandatory, independent of Morgan.

Third-party confidential information under a protective order or similar confidentiality obligation. This is the Morgan fact pattern. The information was V2X’s, produced to Morgan under a confidentiality agreement. Morgan uploading it into a training-enabled AI tool would unravel the bargain without V2X’s consent. The harm is concrete, the non-consenting party bears it, and Braswell’s rule is proportionate.

The Enforceability Spectrum

Once you know which category your data falls in, you need to know which tier of contractual protection the tool actually offers. Tool choice is about whether the protection is enforceable, provable, and durable. That’s not necessarily determined by brand or cost.

A few concrete examples, as of this writing (vendor terms change; verify before relying):

OpenAI Enterprise and OpenAI API commercial tier, Harvey, Anthropic commercial API and enterprise agreements: default no-training on customer data, contractually committed. Strong tier.

ChatGPT with the “improve the model for everyone” toggled off: enforceable representation, but dependent on consumer terms of service. Medium tier for most use; handle privileged information with care.

A free AI tool, or a browser extension AI tool with no “privacy mode” setting and no visible contract: weak. Do not use with client data.

You should always know which tier you are in, and keep in mind what your firm’s AI policy permits.

Best Practices

Keeping in mind, we only have district court decisions regarding AI at this point. Reading those decisions, six best practices emerge.

Match the tier to the data category. Strong-tier tools are required for third-party confidential information under a protective order, attorney-client privileged communications, and regulated data (PHI, NPI, CUI). Medium-tier tools are acceptable for general research, brainstorming, and non-sensitive drafting. Weak-tier tools belong nowhere near client data.

Audit every AI tool currently in use. Document where the no-training, no-sharing, and no-retention protections are — enterprise terms, MSA, addendum, DPA, or toggle plus vendor representation. Maintain records of what tier each tool occupies and the evidence supporting that placement.

Review your AI policy to map tier to data category. Your policy should expressly address the fact that different categories of data, with different confidentiality needs exist.

Build the spectrum into procurement. Evaluation starts with data processing terms, not features. The right question is not “does the vendor offer a privacy toggle” but “is the toggle anchored in enforceable language, can we prove its state at the time of use, and is the remedy adequate for the data category”.

Counsel should direct AI use whenever the materials may be sought in discovery. The gap between Heppner and Warner turned in part on whether counsel directed the use

Never use a weak-tier tool on client data, regardless of convenience. A browser extension with a “privacy mode” setting and no visible contract is not a defensible choice, and no AI policy should permit it.

The 60-Second Checklist

Before pasting client data into any AI tool, confirm:

Whose data is it? (Yours, the client’s, a third party’s under a protective order, or regulated?)

What tier is the tool? (Strong, medium-strong, medium, or weak?)

Is the tier proportionate to the data sensitivity?

Can you prove the protection state today?

Did counsel direct the use?

If you cannot answer all five, stop. Either move the work to a different tool or build the record before you proceed.

The Bottom Line

Braswell decided a narrow discovery dispute carefully, and intelligently. Braswell’s order illuminated a principle that was already sound. AI tools that ingest, share, or retain user data are not safe for information held under a confidentiality duty owed to others. She set out a framework for when users can put third-party confidential information into an AI tool. It has limited applicability to a lawyer’s own work outside of that factual posture.

© 2026 Amy Swaner. All Rights Reserved. May use with attribution and link to article.

More Like This

Morgan v. V2X Decided a Discovery Dispute. The Commentary Turned It Into Something Bigger.

In Morgan v. V2X, Judge Braswell offers a thoughtful, practical take on AI use in litigation—reminding lawyers (and even pro se litigants like Morgan) that when it comes to confidential data, it’s less about the tool itself and more about how responsibly you handle what you put into it.

Five Takeaways from the First Two AI Privilege Decisions

Early court decisions confirm that using AI does not automatically waive privilege—but failing to ensure confidentiality, proper settings, and attorney direction just might.

Is It Safe to Put Confidential Information in AI Tools?

Using AI with confidential client information is not inherently riskier than the cloud tools lawyers already trust—provided it’s done with the same level of diligence, configuration, and vendor scrutiny.